Imagine receiving a frantic phone call from your daughter. She is sobbing, telling you she has been in a terrible car accident, her phone is broken, and she needs you to wire $5,000 immediately to a hospital account to cover emergency surgery. The voice is unmistakably hers: the exact pitch, the slight lisp, the specific way she breathes between sentences. Panic sets in, and you rush to transfer the money. A few hours later, your daughter walks through the front door, completely unharmed, wondering why you are in tears. You have just become the victim of the most terrifying and rapidly expanding cybercrime of 2026: AI voice cloning and deepfake extortion. Hackers no longer need to guess your passwords; they are simply stealing your identity and using it against your loved ones in real time.

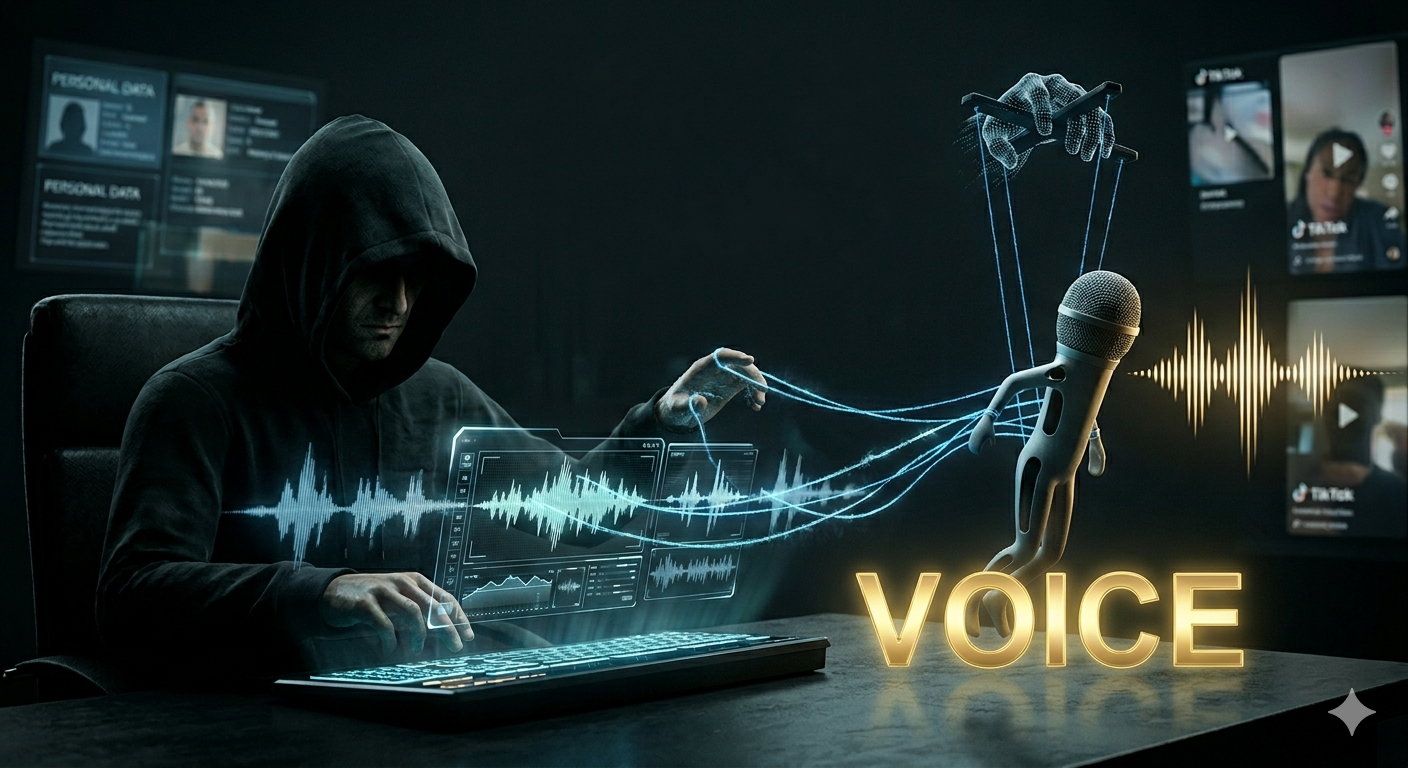

The generative AI boom has brought miraculous tools for productivity and creativity, but it has also handed a nuclear weapon to scammers. In 2026, you do not need a recording studio or massive computing power to clone a human voice. Open-source AI audio models require only three seconds of clean audio—easily harvested from a TikTok video, an Instagram reel, or a voicemail greeting—to generate a perfect, emotionally inflected synthetic voice that can say anything the hacker types. According to a shocking April 2026 report by Cybersecurity Ventures, AI-driven impersonation scams have surged by 410% globally in the last twelve months, resulting in over $8.5 billion in losses for private individuals and small businesses. We are living in a reality where seeing is no longer believing, and hearing is absolutely no longer trusting.

As a tech analyst deeply embedded in the AI space, I recently participated in a white-hat security penetration test. With explicit permission, I took a 5-second clip of my colleague speaking during a public Zoom webinar. Using a commercially available, $20/month AI voice cloning API, I generated a synthetic audio clip of him urgently requesting the password to our company’s secure server. I played the clip over the phone to our IT manager. The IT manager handed over the password without a moment of hesitation. When I revealed it was a deepfake, the color drained from his face. If a trained IT professional can be fooled in seconds, your elderly parents or stressed family members stand zero chance. You must understand the mechanics of this dark side of AI and build immediate, impenetrable defenses around your identity.

1. The Mechanics of the Attack: How Voice Cloning Works

The danger of 2026 voice cloning lies in ‘zero-shot text-to-speech’ models. Unlike older technologies that required hours of reading specific scripts to build a voice profile, zero-shot models use neural networks to instantly analyze the acoustic features (timbre, cadence, and accent) from a tiny audio sample. The hacker simply uploads your 3-second Instagram clip to a dark-web portal or even a poorly regulated commercial platform. They then type the extortion script: “Mom, I’m in jail, please send money.” The AI synthesizes the audio convincingly, even adding background noise like police sirens or hospital machines to increase the psychological panic. The caller ID is often spoofed to look like it is coming from a local police department or your own phone number.

2. The Video Threat: Real-Time Deepfake Video Calls

The threat is escalating beyond audio. Hackers are now using real-time deepfake video technology to bypass facial recognition security and fool targets on video calls. Starting from a single profile picture taken from your LinkedIn, AI models can map your face onto an actor’s movements in real time during a Zoom or FaceTime call. The deepfake will blink, nod, and speak with your cloned voice. In a widely reported 2024 case in Hong Kong, a finance worker at a multinational corporation wired about $25 million to scammers after a video conference in which the “CFO” and several “colleagues” ordered the transaction. All of them were AI deepfakes, and the visual and auditory illusion was convincing enough that the transfers went through.

3. The Ultimate Defense: Implementing a ‘Family Safe Word’

You can no longer rely on technological filters or Caller ID to protect yourself. The only foolproof defense against AI impersonation is a low-tech, human protocol: the Family Safe Word. Sit down with your parents, children, and close friends today and establish a random, nonsensical safe word or phrase (e.g., “Purple Elephant” or “Omaha 1999”). Never post it or send it over text or email, because a safe word only works while it stays secret. Agree that if anyone ever calls requesting emergency money, sensitive information, or immediate action, they must provide the safe word before the conversation continues. If the voice on the other end cannot provide the safe word, no matter how much it sounds like your child, you hang up immediately and attempt to contact them through an alternative, verified channel.
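For readers who think in code, the safe word rule can be written down as a tiny decision procedure. This is only an illustrative sketch of the protocol described above, not a tool you need to install: the function names, the three-argument shape, and the return labels `"continue"` and `"hang_up_and_call_back"` are all made up for this example, and the normalization step (ignoring case and extra spaces) is an assumption about how forgiving a family might want to be.

```python
import unicodedata
from typing import Optional


def normalize(phrase: str) -> str:
    """Fold case and collapse whitespace so ' purple  ELEPHANT ' matches 'Purple Elephant'."""
    folded = unicodedata.normalize("NFKC", phrase).casefold()
    return " ".join(folded.split())


def safe_word_matches(spoken: str, agreed: str) -> bool:
    """True only when the spoken phrase equals the agreed phrase after normalization."""
    return normalize(spoken) == normalize(agreed)


def handle_urgent_call(
    asks_for_money_or_secrets: bool,
    spoken_safe_word: Optional[str],
    agreed_safe_word: str,
) -> str:
    """Hypothetical decision rule for the Family Safe Word protocol.

    Returns "continue" or "hang_up_and_call_back". How real the voice
    sounds is deliberately not an input: it is not evidence.
    """
    if not asks_for_money_or_secrets:
        # Ordinary calls do not trigger the protocol.
        return "continue"
    if spoken_safe_word is not None and safe_word_matches(
        spoken_safe_word, agreed_safe_word
    ):
        return "continue"
    # Missing or wrong safe word: end the call and reach the person
    # through a separate, already-verified channel.
    return "hang_up_and_call_back"


if __name__ == "__main__":
    agreed = "Purple Elephant"
    print(handle_urgent_call(True, "purple elephant", agreed))
    print(handle_urgent_call(True, None, agreed))
```

The design point is what the function leaves out. There is no parameter for "sounds exactly like my daughter," because a cloned voice would pass that test every time.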

The golden rule of 2026 is simple: Assume every urgent, emotionally charged phone call or video request involving money or security is a synthetic attack until proven otherwise. Do not post high-quality audio or video of yourself on public, unsecured profiles if you can avoid it. The AI revolution is a double-edged sword, and while we use it to automate our work, syndicates are using it to automate their crimes. Establish your safe word tonight, educate your vulnerable family members, and build a firewall of skepticism around your digital identity. In the age of deepfakes, your paranoia is your greatest protection.

#VoiceCloning #Deepfake #Cybersecurity #AIThreats #ScamAlert #IdentityTheft #FamilySafeWord #TechSafety #ArtificialIntelligence #DarkWeb #SecurityHacks
